
Solving the “Black Box” AI Challenge in Clinical Decision Support

The Accountability Gap in Healthcare

“Because AI said so” is never an acceptable justification for clinical or operational decisions in healthcare and life sciences.

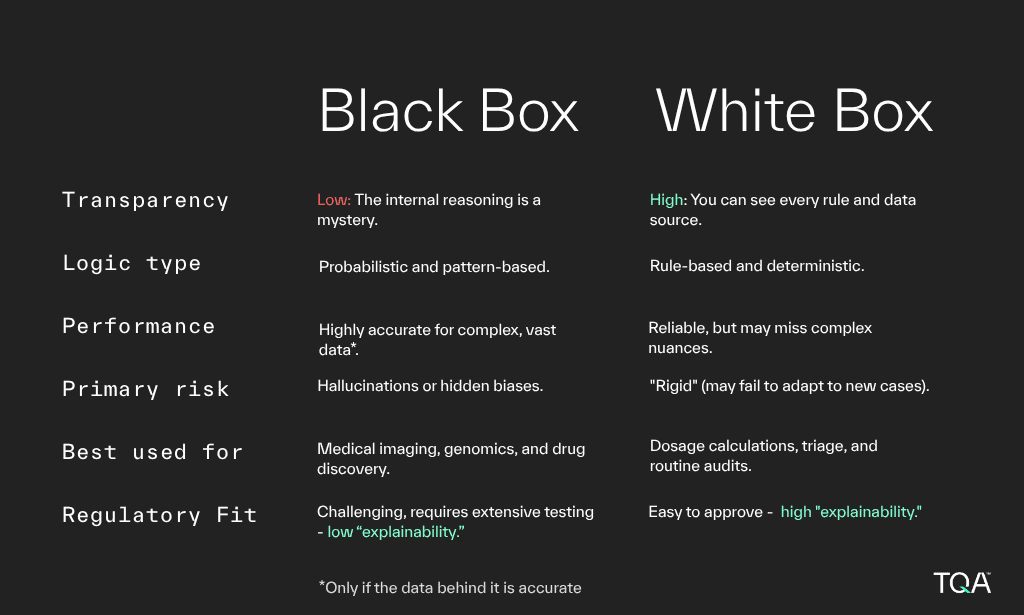

As AI adoption accelerates across healthcare, many organizations are opting for “black box” models. These complex neural networks can deliver high predictive accuracy but offer little or no transparency into their decision-making logic. As a result, they cannot explain the specific reasoning behind an output, such as why a medical claim was flagged or why a particular patient intervention was recommended.

A Liability and Regulatory Minefield

When AI offers clear, explainable logic, clinicians feel empowered and in control. Visible reasoning removes the frustration of hidden decision processes and builds confidence in AI tools.

Regulatory bodies increasingly require transparent logic that aligns with clinical standards. A health system that cannot reproduce and justify an AI-driven decision for payers or for legal review exposes itself to significant legal and regulatory risk.

“Black box” models are also susceptible to hidden biases that can systematically disadvantage patients based on demographics. Without explainable logic, these biases remain invisible.

Why Explainability Matters for AI in Healthcare

At TQA, we advocate for a “White Box” approach to AI in healthcare. Unlike black box models, White Box AI is transparent and rule-based. It applies explicit “if-then” rules, uses only approved inputs, and maps every decision directly to written clinical or operational documentation.

This framework provides a clear explanation for every outcome, which matters for several reasons (the video below explores why):

- Regulatory Compliance: Regulatory bodies require transparent AI logic that aligns with clinical guidelines.

- Audit & Reproducibility: Health systems must be able to reproduce and justify every decision for payers, compliance teams, and legal reviewers.

- Accountability: Clinicians must validate AI recommendations and take ownership of patient care decisions.

- Trust & Adoption: Providers won’t use systems they can’t understand or explain to patients.

- Bias & Fairness: Transparent logic reveals whether AI systematically disadvantages patients by race, gender, age, or insurance status.

- Continuous Improvement: Transparent logic enables targeted refinement and demonstrates ROI to leadership.
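To make the “if-then” idea more concrete, here is a minimal Python sketch of a rule-based decision that records which rule fired and which written policy it traces back to. It is an illustration under stated assumptions, not TQA's implementation: the approved input fields, procedure codes (`PROC-A`, `PROC-B`), rule IDs, and policy references are all hypothetical placeholders. Because the same inputs always trigger the same rule, each outcome can be reproduced and explained during an audit.

```python
from dataclasses import dataclass
from typing import Callable, Optional

# Hypothetical whitelist of approved inputs; any other field is rejected.
APPROVED_INPUTS = {"age", "procedure_code", "prior_auth_on_file"}


@dataclass(frozen=True)
class Rule:
    rule_id: str                       # hypothetical rule identifier
    description: str                   # human-readable "if-then" statement
    source_doc: str                    # hypothetical pointer to written policy
    condition: Callable[[dict], bool]  # the "if" part
    outcome: str                       # the "then" part


# Rules are checked in order; the first match determines the outcome.
RULES = [
    Rule(
        rule_id="R1",
        description="IF procedure PROC-A AND no prior authorization THEN flag claim",
        source_doc="Payer policy section 4.2 (hypothetical)",
        condition=lambda x: x["procedure_code"] == "PROC-A" and not x["prior_auth_on_file"],
        outcome="FLAG",
    ),
    Rule(
        rule_id="R2",
        description="IF age 65 or older AND procedure PROC-B THEN route to clinician review",
        source_doc="Clinical guideline CG-7 (hypothetical)",
        condition=lambda x: x["age"] >= 65 and x["procedure_code"] == "PROC-B",
        outcome="REVIEW",
    ),
]


@dataclass(frozen=True)
class Decision:
    outcome: str
    rule_id: Optional[str]
    reason: str
    source_doc: Optional[str]


def evaluate(inputs: dict) -> Decision:
    """Apply the rules to a claim and return the outcome with its justification."""
    # Refuse to decide on anything other than exactly the approved inputs.
    if set(inputs) != APPROVED_INPUTS:
        raise ValueError(f"Expected exactly {sorted(APPROVED_INPUTS)}, got {sorted(inputs)}")
    for rule in RULES:
        if rule.condition(inputs):
            return Decision(rule.outcome, rule.rule_id, rule.description, rule.source_doc)
    # No rule fired: the default outcome is still recorded with a reason.
    return Decision("APPROVE", None, "No rule matched", None)


if __name__ == "__main__":
    claim = {"age": 40, "procedure_code": "PROC-A", "prior_auth_on_file": False}
    print(evaluate(claim))
```

Returning the matched rule and its policy source alongside the outcome is what lets a reviewer answer “why was this claim flagged?” directly, and rejecting unapproved fields (such as a ZIP code) keeps the rules from silently depending on proxies for demographics. A production system would likely also need rule versioning and decision logging, which this sketch omits.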

Explainability Frameworks in Practice

We discussed these explainability frameworks, and more, in a recent conversation with Children’s Health, one of the largest pediatric healthcare providers in the USA and the leading pediatric health system in North Texas. The conversation offers actionable insights into implementing transparent AI solutions in healthcare settings. Listen now.

TQA designs agentic workflows that reflect how healthcare actually runs, shaped by deep experience across payer and provider operations.

Schedule a Consultation

We’re here to be your trusted partner in Agentic AI. You can schedule a meeting with us using the form, and we’ll be in touch.
